Voice is awkward. It gets in its own way. People mumble, interrupt themselves, change their minds mid-sentence. Background noise wrecks models that work perfectly in a quiet demo. And yet — for clinicians in motion, on rounds, between patients, hands full — it is the right interface. There is nothing else that works.

The constraint

A resident on a morning round has about 8 minutes per patient. Of those 8 minutes, maybe 90 seconds is documentation. The rest is examination, conversation, and thinking. If your documentation tool asks the clinician to stop, open a laptop, and type — you have lost. You are competing with paper and pen, and paper and pen is winning.

So we went voice-first. Not voice-augmented, not voice-optional. Voice as the primary mode, with a typed fallback for when the model needs correction.

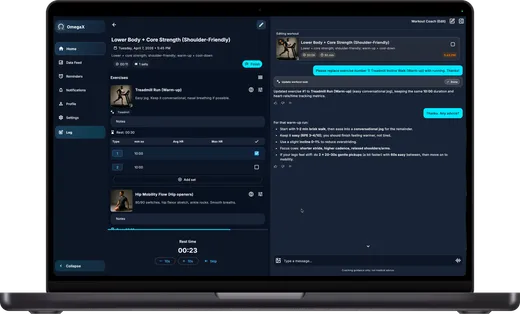

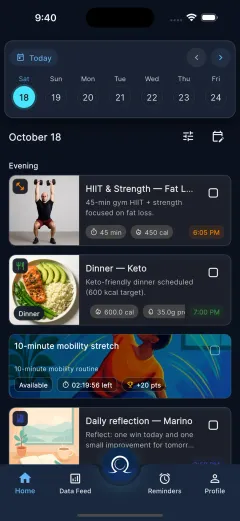

What we shipped

The first version was built around a simple loop:

- Clinician speaks into the OmegaX app during or right after a patient encounter.

- On-device VAD segments the audio into utterances.

- A streaming ASR transcribes each segment with low latency.

- A second model extracts structured fields — problem list, assessment, plan — from the transcript.

- The clinician reviews and signs.

Here's the skeleton of the streaming loop:

```typescript
async function streamEncounter(stream: MediaStream): Promise<void> {
  const session = new VoiceSession(stream);
  // Each utterance is one VAD-delimited segment of audio.
  for await (const utterance of session.utterances()) {
    const transcript = await asr.transcribe(utterance);
    await state.append(transcript);
    // Extract structured fields from the accumulated state, then redraw.
    const structured = await extractor.extract(state.current());
    ui.render(structured);
  }
}
```

Simple enough on paper. In practice, every one of those five steps has edge cases we hadn't anticipated.
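To make the second step of the loop concrete, here is a minimal sketch of utterance segmentation using a plain energy threshold. This is an illustration, not the VAD we shipped: the names `rms` and `segmentUtterances`, the `0.02` threshold, and the three-frame hangover are all assumptions chosen for the example.

```typescript
// Hypothetical energy-threshold VAD. Names and defaults are illustrative only.
interface Utterance {
  startFrame: number; // index of the first speech frame (inclusive)
  endFrame: number;   // index of the last speech frame (inclusive)
}

// Root-mean-square energy of one frame of PCM samples in [-1, 1].
function rms(frame: Float32Array): number {
  if (frame.length === 0) return 0;
  let sum = 0;
  frame.forEach((s) => {
    sum += s * s;
  });
  return Math.sqrt(sum / frame.length);
}

// Split a sequence of frames into utterances. An utterance closes once
// `hangoverFrames` consecutive frames fall below `threshold`, so a brief
// dip in energy does not cut a sentence in half.
function segmentUtterances(
  frames: Float32Array[],
  threshold = 0.02,
  hangoverFrames = 3,
): Utterance[] {
  const utterances: Utterance[] = [];
  let start = -1; // -1 means "not currently inside an utterance"
  let lastSpeech = -1;
  frames.forEach((frame, i) => {
    if (rms(frame) >= threshold) {
      if (start === -1) start = i;
      lastSpeech = i;
    } else if (start !== -1 && i - lastSpeech >= hangoverFrames) {
      utterances.push({ startFrame: start, endFrame: lastSpeech });
      start = -1;
    }
  });
  // Close an utterance that is still open when the audio ends.
  if (start !== -1) utterances.push({ startFrame: start, endFrame: lastSpeech });
  return utterances;
}
```

The hangover is where the edge cases live: too short and a mid-sentence hesitation splits one thought into two segments, too long and the transcript lags behind the speaker.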

What we learned

Latency is a design question, not an engineering one

We started by optimizing ASR latency as an engineering problem — smaller model, more aggressive streaming, tighter inference pipeline. This helped, but only to a point. The real win was rethinking the UX around latency: we stopped trying to show transcripts in real time and instead showed a live-updating structured summary that caught up at natural pauses. Users reported the experience as "faster" even when measured latency was identical.
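One way to picture "catching up at natural pauses" is a small gate between the extractor and the UI. The sketch below is hypothetical: `PauseGatedRenderer`, its method names, and the 600 ms default are assumptions for illustration, and timestamps are passed in explicitly so the logic is easy to test.

```typescript
// Hypothetical pause-gated renderer. It keeps only the newest summary and
// draws it once the speaker has been quiet for at least `pauseMs`.
class PauseGatedRenderer<T> {
  private readonly render: (value: T) => void;
  private readonly pauseMs: number;
  private pending: T | undefined = undefined;
  private hasPending = false;
  private lastSpeechAt = 0;

  constructor(render: (value: T) => void, pauseMs = 600) {
    this.render = render;
    this.pauseMs = pauseMs;
  }

  // Call whenever the VAD reports speech activity.
  noteSpeech(nowMs: number): void {
    this.lastSpeechAt = nowMs;
  }

  // Call whenever the extractor produces a newer summary.
  // Older summaries that were never drawn are simply replaced.
  offer(value: T): void {
    this.pending = value;
    this.hasPending = true;
  }

  // Call on a timer tick. Returns true if a summary was rendered.
  tick(nowMs: number): boolean {
    if (!this.hasPending) return false;
    if (nowMs - this.lastSpeechAt < this.pauseMs) return false;
    this.render(this.pending as T);
    this.pending = undefined;
    this.hasPending = false;
    return true;
  }
}
```

Nothing here makes the pipeline faster. It only moves screen updates to moments when the clinician is likely to look, which fits the observation that perceived speed and measured latency can diverge.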

Noise robustness beats raw accuracy

In a quiet room, every modern ASR is good enough. In a corridor, with a pager going off and a colleague talking two feet away, the gap between models is enormous. We invested heavily in front-end noise handling (beamforming, residual echo suppression) and it paid off more than any model upgrade.
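For readers who haven't met beamforming, the simplest variant is delay-and-sum: shift each microphone channel so the target speaker lines up across channels, then average. The sketch below shows that textbook technique with integer sample delays. It is not a description of our front end, and the function name and signature are assumptions for the example.

```typescript
// Delay-and-sum beamforming with integer sample delays.
// channels[c] is the signal from microphone c; delays[c] is how many samples
// to delay that channel so the target source is time-aligned across mics.
function delayAndSum(channels: Float32Array[], delays: number[]): Float32Array {
  if (channels.length === 0 || channels.length !== delays.length) {
    throw new Error("need at least one channel and one delay per channel");
  }
  if (delays.some((d) => !Number.isInteger(d) || d < 0)) {
    throw new Error("delays must be non-negative integers");
  }
  const length = Math.min(...channels.map((ch) => ch.length));
  const out = new Float32Array(length);
  channels.forEach((channel, c) => {
    const delay = delays[c];
    // Samples before `delay` have no aligned input and stay at zero.
    for (let n = delay; n < length; n++) {
      out[n] += channel[n - delay] / channels.length;
    }
  });
  return out;
}
```

When the delays match the speaker's direction, the speech adds coherently while sound arriving from other directions, such as a colleague talking nearby, adds out of step and is attenuated by the averaging.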

Trust is built on correction, not accuracy

Clinicians will tolerate surprisingly high ASR error rates, as long as errors are easy to spot and trivial to fix. The single biggest adoption lever we pulled wasn't accuracy; it was making the correction UI take one gesture.
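As a rough illustration of what "one gesture" can mean at the data level, suppose each transcribed token keeps the recognizer's ranked alternatives. Everything in this sketch is an assumption made for the example, including the `Token` shape, the `0.8` review threshold, and the tap-to-cycle behavior. It is not a description of the OmegaX correction UI.

```typescript
// Hypothetical token model for one-gesture correction.
interface Token {
  alternatives: string[]; // ranked hypotheses, best first; must be non-empty
  selected: number;       // index into `alternatives` currently shown
  confidence: number;     // score in [0, 1] for the top hypothesis
}

// Low-confidence tokens get highlighted so errors are easy to spot.
function needsReview(token: Token, threshold = 0.8): boolean {
  return token.confidence < threshold;
}

// A single gesture (for example, a tap) advances to the next hypothesis,
// wrapping around at the end. Returns a new token; the input is not mutated.
function cycleAlternative(token: Token): Token {
  const next = (token.selected + 1) % token.alternatives.length;
  return { ...token, selected: next };
}

// The text currently shown to the clinician.
function renderText(tokens: Token[]): string {
  return tokens.map((t) => t.alternatives[t.selected]).join(" ");
}
```

The design point is that spotting (`needsReview`) and fixing (`cycleAlternative`) are separate and both cheap, so a misrecognized word costs a glance and a tap rather than retyping.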

What changes in v2

We're rebuilding the pipeline around two changes:

- On-device first. The privacy profile and the offline behavior are both materially better.

- Speaker diarization. Distinguishing clinician speech from patient speech matters a lot for structured extraction, and we've been doing it heuristically. Time to do it properly.

More on both once they ship.