An AI health agent is not useful because it can answer health questions. It is useful when it can turn a person's goals, context, and real day into the next practical action, then stay close enough to help them follow through, log what happened, understand the pattern, and adapt the plan.

That distinction matters. A chatbot can explain hydration, sleep, training, or nutrition. A search engine can return a list of tips. An AI health agent should do something narrower and harder: help a person move through the day with less drift between intention and action.

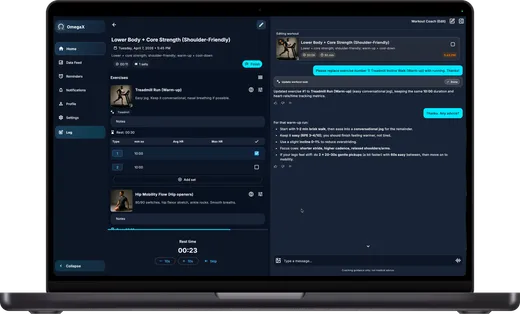

OmegaX uses the phrase AI health agent inside a broader Health OS frame. The agent is the surface a person talks to. The operating system is the structure underneath it: plans, reminders, logs, connected data, task flows, interpretations, and optional program or protection flows where the build and eligibility support them.

The agent starts with the day, not the advice

Most health software begins by asking the user to choose a feature: track a meal, log a workout, read a lesson, start a chat, connect a device. That model works when the user already knows what they need.

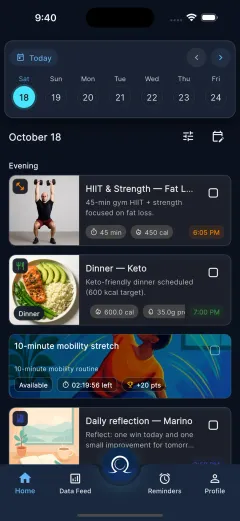

Real life is messier. The useful question is often not "What feature should I open?" It is "Given my goals, my schedule, my energy, and what happened yesterday, what should I do next?"

That is the first job of an AI health agent. It should translate context into a day plan. In OmegaX, that means a user can generate and regenerate daily plans, choose which content categories should be auto-generated, edit or reschedule tasks, and discuss the plan in context when the day changes.

The point is not to produce a perfect schedule at 7 a.m. The point is to keep the plan alive after the first version breaks.
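To make "keeping the plan alive" concrete, here is a minimal sketch of one possible replanning rule. It is not OmegaX's implementation. The `Task` fields, the `replan` function, the priority scheme, and the 22:00 day-end default are all assumptions made for illustration. The idea is simply that unfinished tasks are laid out again from the current time, most important first, and anything that no longer fits is dropped rather than counted as a failure.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Task:
    # Hypothetical task shape; field names are illustrative only.
    name: str
    start_min: int      # scheduled start, in minutes after midnight
    duration_min: int
    priority: int       # 1 = most important
    done: bool = False


def replan(tasks: list[Task], now_min: int, day_end_min: int = 22 * 60) -> list[Task]:
    """Rebuild the rest of the day starting from the current time."""
    finished = [t for t in tasks if t.done]
    # Unfinished tasks are reconsidered in priority order (the sort is stable).
    pending = sorted((t for t in tasks if not t.done), key=lambda t: t.priority)

    cursor = now_min
    rebuilt = []
    for task in pending:
        # Keep a task only if it still fits before the end of the day.
        if cursor + task.duration_min <= day_end_min:
            rebuilt.append(replace(task, start_min=cursor))
            cursor += task.duration_min
    return finished + rebuilt
```

Called at 20:30 with a missed workout, a missed 90-minute meal prep, and an evening journal task, this rule would keep the workout and the journal and let the meal prep go. A real planner would weigh far more context, but the shape is the same: the plan is recomputed from where the day actually is.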

A real AI health agent connects conversation to action

The weak version of an AI health agent is a chat window with health vocabulary. The stronger version connects conversation to product surfaces that can actually carry the user forward.

In OmegaX, the agent sits beside a real execution layer: Home for the day plan, Data Feed for logging and review, Reminders for recurrence and re-entry, Notifications for missed prompts and voice calls, and Profile and Settings for the deeper personalization model.

That matters because health follow-through is usually lost in the handoff. A person receives advice, closes the app, and has to remember the next step alone. An agent should reduce that handoff. If the user talks about training, the system should be able to route into a workout flow. If the user logs a meal, the system should be able to remember that context later. If the day slips, the system should be able to rebuild the next-best version instead of treating the missed plan as failure.
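The routing idea can be sketched in a few lines. This is a deliberately naive illustration, not a description of how OmegaX routes conversations: the flow names (`replan`, `workout`, `meal_log`, `chat`) and the keyword lists are hypothetical, and a production system would rely on much richer intent detection than keyword matching.

```python
import re

# Hypothetical flow names and keywords, for illustration only.
# Order matters: "replan" is checked first, so "I missed my run"
# rebuilds the day instead of opening a workout flow.
ROUTES: dict[str, frozenset[str]] = {
    "replan": frozenset({"missed", "skipped", "behind", "reschedule"}),
    "workout": frozenset({"train", "training", "workout", "gym", "run"}),
    "meal_log": frozenset({"ate", "meal", "breakfast", "lunch", "dinner"}),
}


def route(message: str) -> str:
    """Pick a product flow for a message, falling back to plain chat."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    for flow, keywords in ROUTES.items():
        if words & keywords:
            return flow
    return "chat"
```

The design choice worth noticing is the fallback. When nothing matches, the message stays in conversation, so routing adds a next step where one exists without forcing every message into a feature.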

The agent is not just answering. It is routing.

Voice changes the relationship

Typing is fine when the user is sitting still. Health rarely happens sitting still.

An AI health agent becomes more useful when voice is a first-class surface: live spoken sessions, call-style coaching behavior, missed-call review inside the notification inbox, and transcription-backed input where it fits. Voice makes the agent feel less like a form and more like an active companion.

That does not mean voice should replace every interface. A trustworthy product still needs visible review, clear controls, and quiet ways to correct or edit. But voice is important because it catches moments where typing is too heavy: after a workout, while cooking, during a walk, or when a clinician is trying to capture what happened without losing attention.

The interface choice is part of the product thesis. The easier it is to capture the day, the better the agent can adapt tomorrow.

The agent needs memory, but not mysticism

Personalization is where AI health agents can become vague. "It knows you" sounds impressive and says almost nothing.

The more concrete version is simpler. The agent should be shaped by goals, routines, preferences, constraints, health context, connected data, and the user's own logs. OmegaX's onboarding and profile model can include core profile details, goals and programs, motivations, obstacles, sleep windows, coaching style, connected health permissions, and health-context fields such as dietary preferences, medications, allergies, exercise preferences, and wellbeing context where relevant.

That does not make the agent a doctor. It gives the system enough structure to stop offering generic advice. A reminder for hydration, a workout task, a meal suggestion, a journal prompt, or a recovery adjustment should be grounded in the user's actual pattern, not in a universal wellness script.

Good memory is practical. It changes the next action.

Logging is not admin work; it is the feedback loop

If an agent cannot learn what happened, it cannot responsibly adapt what happens next.

That is why logging is not a side feature. OmegaX has a data layer for workouts, meals, sleep, hydration, weight, blood pressure, blood glucose, temperature, heart rate, steps, active energy, distance, body-fat percentage, mood, stress, energy, notes, journals, and other supported record types. It can also work with synced signals from supported integrations.

The important design question is not how many metrics exist. It is whether the user can turn lived events into useful feedback without feeling like a clerk. Manual entries, notes, images, connected health data, and structured task completions all become inputs into the same loop: decide, do, log, understand, adapt.
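One small piece of that loop, the step from "log" to "adapt", can be sketched as follows. The log format, the function names, the `"shrink"` and `"keep"` labels, and the 50% threshold are assumptions chosen for illustration, not OmegaX's actual logic. The sketch only shows how completion logs could feed back into how ambitious tomorrow's plan is.

```python
from collections import defaultdict


def completion_rates(logs: list[tuple[str, bool]]) -> dict[str, float]:
    """Turn (category, was_completed) log entries into a rate per category."""
    totals: dict[str, int] = defaultdict(int)
    completed: dict[str, int] = defaultdict(int)
    for category, was_done in logs:
        totals[category] += 1
        completed[category] += int(was_done)
    return {category: completed[category] / totals[category] for category in totals}


def adapt(rates: dict[str, float], threshold: float = 0.5) -> dict[str, str]:
    """Suggest a smaller target where follow-through is low (assumed rule)."""
    return {
        category: "shrink" if rate < threshold else "keep"
        for category, rate in rates.items()
    }
```

Under this rule, a category the user completes one time in three would get a smaller target the next day, while one completed two times in three would stay as planned. The point of the sketch is the direction of the data flow: what was logged changes what is asked for next.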

That loop is where an AI health agent earns the word agent.

It should explain patterns without pretending to diagnose

People do not only need raw charts. They need help understanding what the pattern might mean for the next decision.

OmegaX frames this as interpretation rather than diagnosis. The product can summarize progress drivers, friction points, and possible next actions through its insight layer. It should not pretend to replace clinical judgment, give medical advice, or diagnose conditions. The safer and more useful role is to help a user see their own pattern clearly enough to choose the next step or know when to escalate outside the app.

That boundary is not a legal footnote. It is part of the product's usefulness. An agent that overclaims becomes less trustworthy exactly when the stakes rise.

The agent can support optional program and protection flows

OmegaX should be explained as a health product first. The agent's daily job is coaching, execution, logging, interpretation, and adaptation.

Where the build, entitlement, program, legal structure, and readiness allow it, the same product logic can also connect to optional reward, eligibility, or acute-protect flows. The important word is optional. These surfaces extend the Health OS; they are not the default explanation of the consumer app.

That discipline keeps the agent understandable. A user should not have to understand protocol infrastructure before they understand what happens on Monday morning.

What to expect from a serious AI health agent

A serious AI health agent should pass a few plain tests:

- Can it turn context into a realistic plan for today?

- Can the user act on that plan inside the product?

- Can it capture what happened without making logging feel like punishment?

- Can it adapt when the user's day changes?

- Can it explain patterns without crossing into unsupported medical claims?

- Can it escalate clearly when the question is outside its scope?

If the answer to any of these is no, the product may still be a useful chatbot, tracker, or content app. It is not yet an agent in the sense that matters for health.

The promise of an AI health agent is not that every person gets infinite advice. The promise is that the distance between knowing what to do and actually doing it gets shorter. That is the product category worth building.